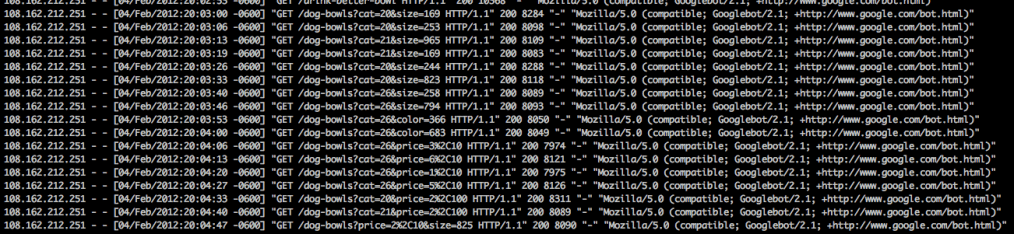

Googlebot loves to crawl: it’ll crawl anything that looks like a URL, whether it finds it in JavaScript, HTML, or the visible text of a page. That’s great for Google, and probably great for web users because Google learns more about the web, but it can lead to wasted crawls for site owners.

As I mentioned in a post about initial SEO decisions for The Dog Way, I blocked all category pages. I’ve loosely monitored Google’s crawls and found Googlebot crawling any available URL: pagination, sorting by size, price, color, and so on.

(For those who look at log files: you’ll notice the IPs aren’t Googlebot IPs. We’re using Cloudflare to try to speed up the site, and all requests come through their IPs.)

Now I need to find all of the URLs I should have blocked, but didn’t. A very, very simple Unix command does it: `wget -O- url | grep -o urlpath.*\" | sort | uniq`

(What I actually ran: `wget -O- http://thedogway.com/dog-boots-and-shoes | grep -o dog-boots-and-shoes.*\" | sort | uniq`)

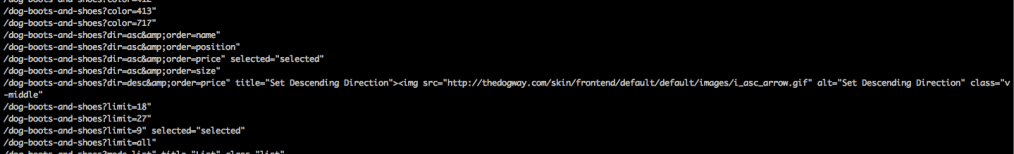

Here’s what the output looks like:

To break that down:

`wget -O- url`: wget is a program to download files; by default it’ll save the file in the current directory. The `-O-` tells it to write the download to standard output instead. The url is the URL to download.

`grep -o urlpath.*\"`: urlpath is the part of the URL after the domain; in this case it was dog-boots-and-shoes. The `.*` matches whatever follows the path, and the `\"` escapes the quote so the shell passes a literal " to grep instead of treating it as the start of a quoted string. The `-o` outputs just the text that matches and nothing else.

`sort | uniq`: sorts the lines and then outputs just the unique ones.
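One thing to watch with that pattern: `.*` is greedy, so when a line of HTML holds several links the match runs to the last quote on the line. Here is a minimal sketch of a tighter variant, not what I ran. The function names `list_urls` and `count_params` are made up for illustration, and both read HTML on standard input so they can be tried without hitting the site.

```shell
# Hypothetical helper: list the unique URLs under a given path.
# [^"]* stops at the first closing quote, so each match is a single URL.
list_urls() {
  grep -o -e "$1[^\"]*" | sort -u
}

# Hypothetical helper: count how often each query parameter name appears.
# The ';' in the bracket also catches parameters written as &amp;name= in HTML.
count_params() {
  grep -o '[?&;][A-Za-z_][A-Za-z_]*=' | tr -d '?&;=' | sort | uniq -c | sort -rn
}

# Example usage (needs network access):
# wget -qO- http://thedogway.com/dog-boots-and-shoes | list_urls dog-boots-and-shoes
```

`sort -u` does the same job as `sort | uniq`, and the parameter count gives a quick view of which parameters generate the most crawlable links.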

What were the key takeaways from that? I need to improve the handling of pagination and prevent Google from crawling URLs with limit, dir=, size=, and color=. The /p/ URLs are products and are already blocked in robots.txt. The really simple change is to just block them all in robots.txt. Other options are configuring URL parameters in Google Webmaster Tools, trying to block the links with rel="nofollow", or, for pagination, using rel="next" and rel="prev". For right now, I’m just going with robots.txt because it’s the fastest way to fix the crawls.
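A rough sketch of what those robots.txt rules could look like, assuming the parameters show up in the query string under the names listed above (the `limit=` form is my guess from the bare "limit"). It relies on the `*` wildcard, which Googlebot supports. The pagination parameter is left out because its name isn’t shown here.

```
User-agent: *
# Assumed patterns; adjust to the parameters the site really uses.
Disallow: /*?*limit=
Disallow: /*?*dir=
Disallow: /*?*size=
Disallow: /*?*color=
```

These patterns are loose: `/*?*size=` would also match a parameter such as `fontsize=`, so it’s worth checking them in a robots.txt tester before deploying.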