AI is having a moment, but integrating AI into robots is harder than integrating it into a web browser. Unlike software-only AI, robotic AI must contend with the physical world. Despite the challenges, AI keeps finding its way into robots and improving how they interact with their environments.

This is an overview of AI advancements in robotics from the 1960s to the present day, with the goal of seeing how current AI advances might be used by robots.

1960s: Early Innovations in Language Processing and Robotics

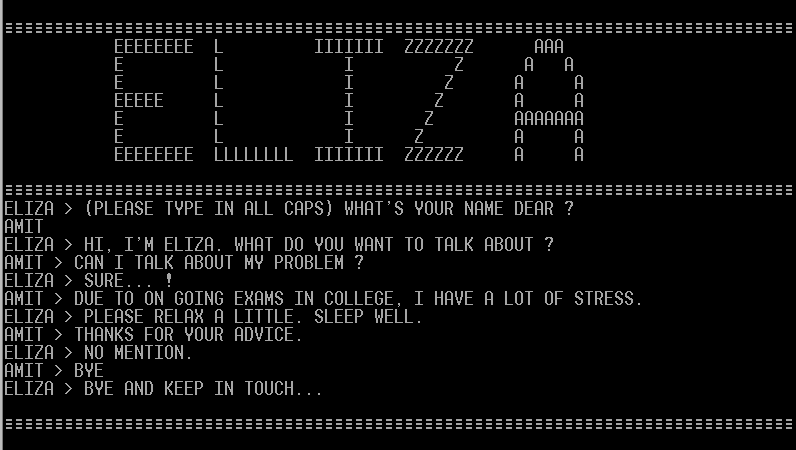

- ELIZA (1964-1966): An early natural language processing program created by Joseph Weizenbaum at MIT, ELIZA used pattern matching and substitution to simulate human conversation.

- Shakey the Robot (1966): Developed at the Stanford Research Institute (now SRI International), Shakey was a groundbreaking robot that combined AI with robotics. Using the STRIPS planner, Shakey could reason about its actions, plan, and navigate its environment.
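
To make the STRIPS idea concrete, here is a minimal sketch of STRIPS-style planning: states are sets of facts, and each action has preconditions, facts it adds, and facts it deletes. The two-room world and action names are invented for illustration, and the search is plain breadth-first search rather than the means-ends analysis the original STRIPS system used.

```python
from collections import deque
from typing import NamedTuple


class Action(NamedTuple):
    name: str
    preconditions: frozenset
    add_effects: frozenset
    delete_effects: frozenset


def plan(initial, goal, actions):
    """Breadth-first forward search over STRIPS-style states (sets of facts)."""
    start = frozenset(initial)
    goal = frozenset(goal)
    frontier = deque([(start, [])])
    visited = {start}
    while frontier:
        state, steps = frontier.popleft()
        if goal <= state:  # every goal fact holds in this state
            return steps
        for action in actions:
            if action.preconditions <= state:
                next_state = (state - action.delete_effects) | action.add_effects
                if next_state not in visited:
                    visited.add(next_state)
                    frontier.append((next_state, steps + [action.name]))
    return None  # no sequence of actions reaches the goal


# Hypothetical world: the robot must push a box from room A to room B.
ACTIONS = [
    Action("go(A,B)", frozenset({"robot_in_A"}),
           frozenset({"robot_in_B"}), frozenset({"robot_in_A"})),
    Action("go(B,A)", frozenset({"robot_in_B"}),
           frozenset({"robot_in_A"}), frozenset({"robot_in_B"})),
    Action("push_box(A,B)", frozenset({"robot_in_A", "box_in_A"}),
           frozenset({"robot_in_B", "box_in_B"}),
           frozenset({"robot_in_A", "box_in_A"})),
]

result = plan({"robot_in_B", "box_in_A"}, {"box_in_B"}, ACTIONS)
print(result)
```

Starting in room B, the planner has to work out that the robot must first walk back to room A before it can push the box.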

1970s: Communicating and Navigating Robots

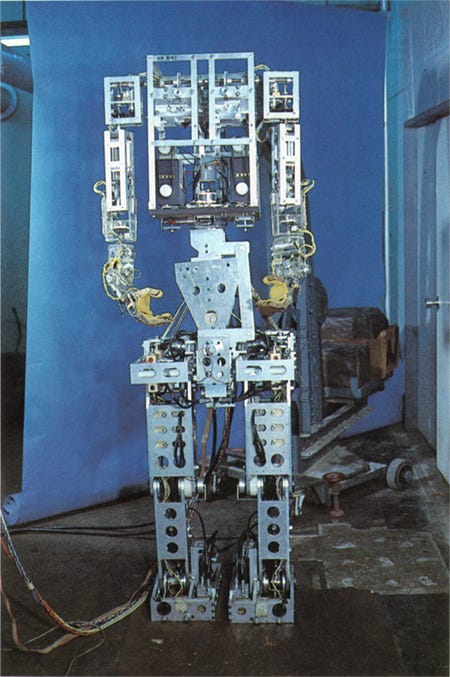

- WABOT-1 (1972): Waseda University in Japan introduced WABOT-1, a full-scale anthropomorphic robot that could converse in Japanese, move its limbs, and measure distances and directions to objects.

- The Stanford Cart (1979): Developed by Hans Moravec, this autonomous robot cart used computer vision to navigate its environment and avoid obstacles.

1980s: Expert Systems and Neural Networks

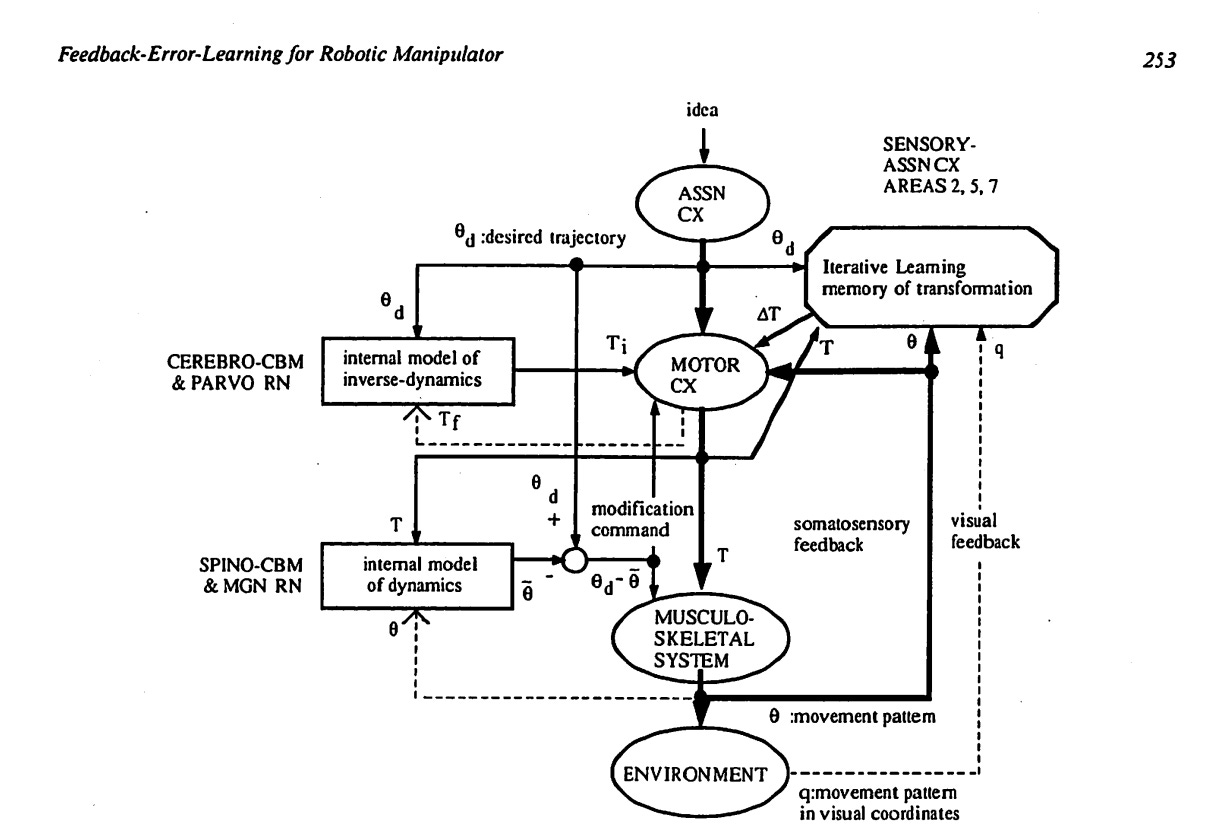

- Expert Systems: Robots benefited from rule-based expert systems, which allowed them to make decisions based on predefined sets of rules.

- Neural Networks: The resurgence of artificial neural networks, driven by backpropagation, allowed robots to learn from data and improve their perception and decision-making.
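
As a rough illustration of the rule-based approach, the sketch below applies a handful of if-then rules to a set of known facts until nothing new can be concluded (forward chaining). The rules and fact names are hypothetical and far simpler than anything a production expert system would contain.

```python
# Hypothetical rules: each maps a set of required facts to a new conclusion.
RULES = [
    ({"obstacle_ahead", "path_left_clear"}, "turn_left"),
    ({"obstacle_ahead", "path_left_blocked"}, "stop"),
    ({"battery_low"}, "return_to_dock"),
    ({"return_to_dock", "obstacle_ahead"}, "request_help"),
]


def forward_chain(facts):
    """Fire rules repeatedly until no rule adds a new fact."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in RULES:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


derived = forward_chain({"battery_low", "obstacle_ahead", "path_left_blocked"})
print(sorted(derived))
```

Note that `request_help` is only reachable because an earlier rule concluded `return_to_dock`; chaining conclusions like this is what lets a small rule set cover many situations.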

1990s: Learning Through Trial and Error

- Reinforcement Learning: Robots could learn through trial and error using reinforcement learning, optimizing actions to reach specific goals.

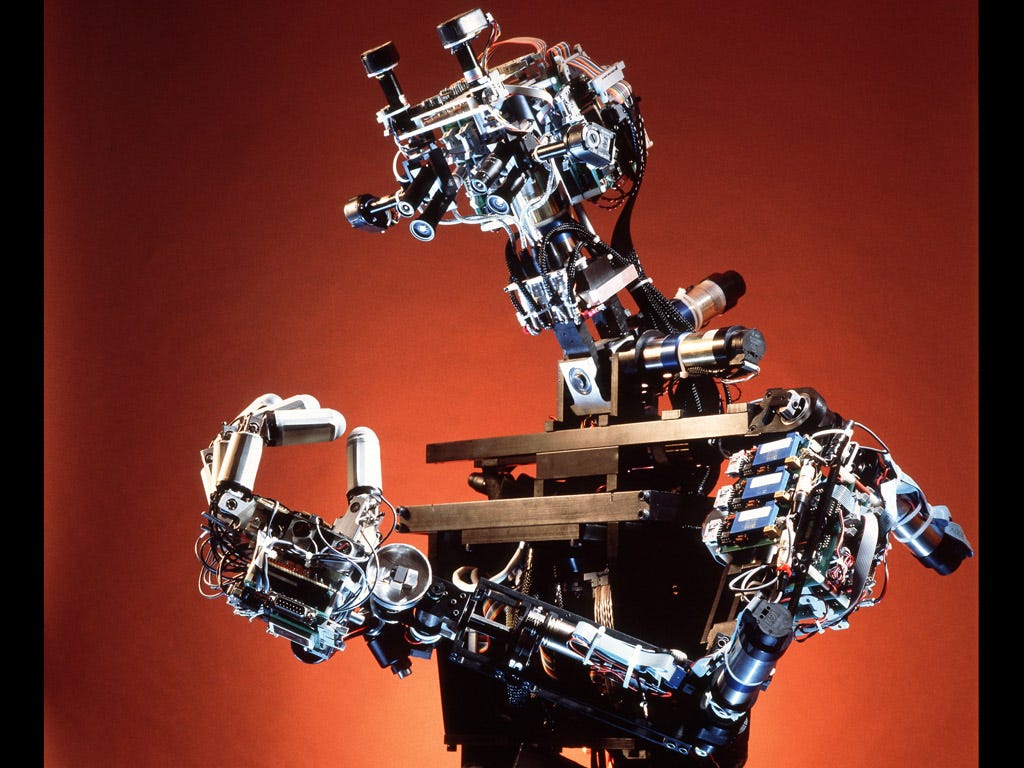

- Cog (1994): Rodney Brooks and his team at MIT developed Cog, a humanoid robot designed to emulate human cognition and learn from its environment.
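
The trial-and-error loop behind reinforcement learning can be sketched with tabular Q-learning, one specific algorithm chosen here for illustration. The five-cell corridor, the reward of 1.0 at the goal, and the learning parameters are all assumptions made up for this example.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)    # move left or move right
ALPHA, GAMMA = 0.5, 0.9


def step(state, action):
    """Deterministic corridor dynamics: reward 1.0 only on reaching the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, max_steps=100, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            # Explore uniformly at random; Q-learning is off-policy,
            # so it can still learn the greedy values from random behavior.
            a = rng.randrange(len(ACTIONS))
            next_state, reward, done = step(state, ACTIONS[a])
            target = reward + (0.0 if done else GAMMA * max(q[next_state]))
            q[state][a] += ALPHA * (target - q[state][a])
            state = next_state
            if done:
                break
    return q


q_table = train()
# Greedy action index per non-goal cell (0 = left, 1 = right).
greedy_policy = [
    max(range(len(ACTIONS)), key=lambda a: q_table[s][a])
    for s in range(N_STATES - 1)
]
print(greedy_policy)
```

The robot is never told that "right" is correct; it only sees a reward at the goal, and the value estimates propagate backward along the corridor over many episodes.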

2000s: Swarm Robotics and RoboCup Competitions

- Swarm Robotics: Researchers began to experiment with decentralized, self-organizing robot swarms, taking inspiration from social insects.

- RoboCup (since 1997): The RoboCup competition encouraged AI and robotics advancements through soccer matches and rescue simulations.

2010s: Deep Learning and Soft Robotics

- Deep Learning: Advancements in deep learning allowed robots to process vast amounts of data for tasks such as object recognition, natural language processing, and autonomous navigation.

- Soft Robotics: The development of soft, flexible materials enabled the creation of robots that can adapt to complex environments and safely interact with humans.

2020s: Large Language Models in Robotics

- OpenAI’s GPT-4 (2023): A powerful language model that can interpret and generate human-like text, enabling more natural and effective communication between humans and robots.

- AI-driven robotics in healthcare: AI advancements led to the development of robots that can assist with tasks such as patient care, surgery, and medical diagnostics.

Large language models like GPT-4 require vast amounts of storage and compute to run inference. Smaller models like Alpaca 7B, by contrast, can run on a MacBook Air or a Raspberry Pi. This gives robots the ability to parse natural-language instructions into a sequence of commands, opening up new avenues for therapeutic robots and cobots.
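
One way the instruction-to-command step might look on the robot side is sketched below. It assumes the language model has been prompted to answer with a JSON list of commands; the command vocabulary, the `parse_commands` helper, and the sample model output are all hypothetical, and no real model is called.

```python
import json

# Hypothetical command vocabulary: command name -> required argument names.
ALLOWED = {
    "move_to": {"location"},
    "pick_up": {"object"},
    "release": set(),
}


def parse_commands(model_output: str) -> list:
    """Validate a JSON list of commands before anything reaches the motors."""
    commands = json.loads(model_output)
    if not isinstance(commands, list):
        raise ValueError("expected a JSON list of commands")
    for cmd in commands:
        if not isinstance(cmd, dict):
            raise ValueError("each command must be a JSON object")
        name = cmd.get("command")
        if name not in ALLOWED:
            raise ValueError(f"unknown command: {name!r}")
        args = set(cmd) - {"command"}
        if args != ALLOWED[name]:
            raise ValueError(f"bad arguments for {name}: {sorted(args)}")
    return commands


# Stand-in for what a model might return for
# "bring me the cup from the kitchen"; not real model output.
sample_output = (
    '[{"command": "move_to", "location": "kitchen"},'
    ' {"command": "pick_up", "object": "cup"},'
    ' {"command": "release"}]'
)
commands = parse_commands(sample_output)
for cmd in commands:
    print(cmd)
```

Checking the model's output against a fixed vocabulary matters more for a robot than for a chatbot, since a malformed or invented command would otherwise turn into physical motion.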

The success LLMs have seen from large datasets and reinforcement learning from human feedback (RLHF) might provide a path toward improving robot vision and task recognition.